For organizations with production and test databases (you do have a test environment, don't you?), copying updated production data into the test environment can be cumbersome. Rather than having to back up the production data and restore it into the testing database, you can use the datapump command line tool.

Simple Datapump

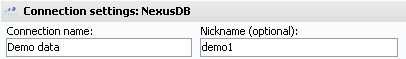

VSys.exe and VSys.ini are located.VSys.exe datapump:source,targetsource is the production database's name or nickname, and target is the test database's name or nickname. VSys.exe datapump:source,target,table1,table2,table3source is the production database's name or nickname, target is the test database's name or nickname, and the tableX options (one or more of them) are the names of the tables to be copied. If no tables are specified then all tables are copied.-". For example,VSys.exe datapump:source,target,-trace,-voxilogs,-zipstrace, voxilogs and zips.After moving each table, VSys will check the source and target rows against each other as a sanity check; VSys calls these "row hashes". This can be slow; see "Row hashes" below to disable it if appropriate.

Datapump with a settings file

Datapump can also be launched using a specially-formatted XML file. The command prompt syntax is:

VSys.exe datapump:settings:c:\VSys\filename.xml

or, to include the source and target connection names on the command line, VSys.exe datapump:source,target,settings:c:\VSys\filename.xml

The settings XML should be formatted as in:<DATAPUMP Source="sstemp2" Target="temp2" NoValidateRowHashes="0" SkipLameTrace="0" TransactionMultiplier="0" NoChangeRecovery="0"> <TABLES>address, appFields, applications, assignments, attachments, availability, availGroups,awards, blacklist, certifs, comments, courses, creds, entries, events, groups, hours, interviews, letters, lists, locations, mandates, memberships, money, nindex, notifications, options, optionValues, people, relationships, reports, schedule, slots, surveys, tags, trace, training, transitions, webapps, webpends</TABLES> <FILTER Table="trace" MinDate="2014-01-01"/> <FILTER Table="letters" MinDate="2014-01-01"/></DATAPUMP>

Source and Target, if provided, override the equivalent command line parameters.<TABLES> to indicate the table names to be pumped, separated by commas. If none are provided, all tables will be processed; does not support the "-" (exclude) syntax.<FILTER> elements limit the records moved from specific tables to only those on or after the given date. Tables supporting date-based filtering are: attachments, letters, trace, notifications, transitions and voxilogs. Filters specified for any other tables will be ignored.SkipLameTrace="1" applies a specific filter to trace records: actions such as "lock", "unlock", "E-mail Robot heartbeat", etc. are skipped.NoChangeRecovery="1" tells VSys, for SQL Server target databases, not to change the recovery model of that database to "SIMPLE" before pumping data in, and then back to its original state when done.Row hashes

Disable checking of row hashes for the entire process with NoValidateRowHashes="1" in the settings XML file. Or put

[Magic] DatapumpNoValidateRowHashes=1

into the VSys.ini file.

To disable them for individual tables,

<DATAPUMP... NoRowHashes"trace,voxilogs,voxisessions"...>

Having issues with date/time values not matching up? This occasionally happens when moving from PostgreSQL or an older SQL Server to SQL Server 2017 where the rounding of time values between different systems causes a hash mismatch. In your settings XML file, put <DATAPUMP... RowHashesTimeFlex="1"...>

to tell VSys to compare date/time values against each other only on the date, hours and minutes aspects of the values.

Throttling

To tell VSys to intentionally slow the data transfer process, the datapump tool supports a "Throttle" option:

[Magic] DatapumpThrottle=z

where z is the % slowdown to use; a value greater than zero causes VSys to pause, after each posted transaction, for z% of the time that that last transaction took to run. This is intended to allow datapump to run without bogging down the underlying database server.

Large transactions

To override the transaction size - the maximum number of records posted to the target database at once - set a multiplier in one of two places.

TransactionMultiplier in the <DATAPUMP> element settings file above, orVSys.ini file:[Magic] TransactionMultiplier=2.5Where TransactionMultiplier applied to either the explicit transaction size or the default one as appropriate. VSys will first look to the datapump settings file then fall back to the value in the VSys.ini file.

Why? VSys defaults to a "one-size-fits-all" transaction size that is unlikely to exceed the available memory in any given database server. If your database server has a very large amount of memory and is not particularly busy, using a very large transaction size pushes more data per transaction, improving performance of both network on disk, at the expense of using substantial additional temporary memory on the database server during the process.